Modern websites face an invisible bottleneck that most developers never see coming. While you’re optimizing code and tweaking CSS, there’s a hardware characteristic quietly dictating your site’s ultimate performance ceiling. It’s not CPU core count, RAM capacity, or storage type, yet it can explain much of the performance gap between servers that look identical on a spec sheet.

The conversation around server performance has traditionally focused on the obvious metrics: CPU cores, RAM capacity, and storage speed. These are the specs that populate comparison charts and marketing materials. But beneath this surface-level discussion lies a critical factor that most hosting providers and cloud engineers quietly optimize for while rarely mentioning it to customers.

Take Cloudflare’s recent Gen13 configuration, for example. Their engineers didn’t just upgrade processors—they fundamentally reconsidered how server components interact at the hardware level. This subtle shift in thinking reveals what truly separates high-performance infrastructure from the rest.

What Makes Server Hardware Actually Perform?

You might assume that faster processors automatically translate to better website performance. This is only partially true. The real performance bottleneck often lies in how efficiently data moves between components. Consider this: a server with premium components can still perform poorly if those components can’t communicate effectively.

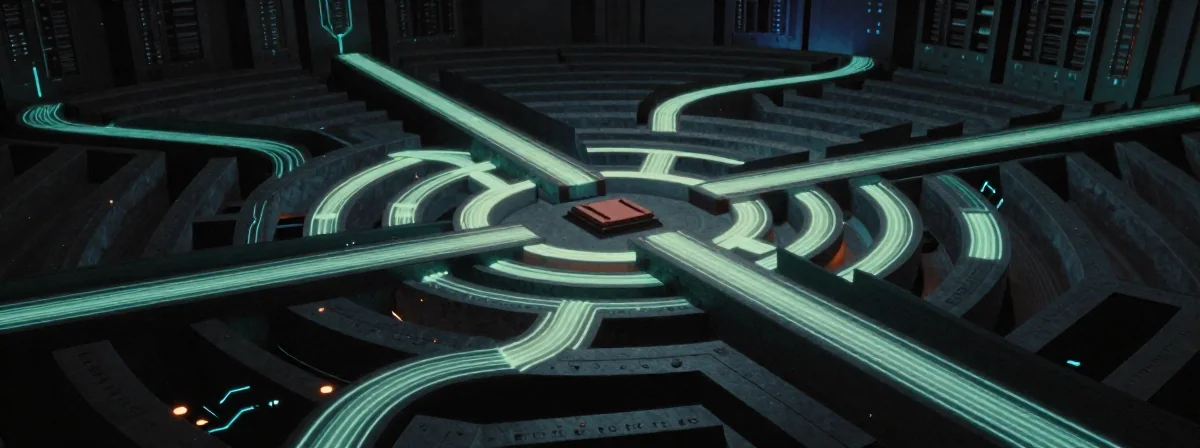

Think of it like a highway system. You could have the widest, smoothest roads (fast processors), but if the on-ramps and off-ramps are poorly designed (inefficient data pathways), traffic will still back up. Server performance works similarly—the interconnects and memory bandwidth often create the real constraints.

The Cloudflare blog post on Gen13 configuration highlights this precisely. They didn’t just add more powerful processors; they optimized the entire data flow architecture. This included reconsidering memory channel configurations, PCIe lane assignments, and even thermal management approaches that affect sustained performance.

Why Standard Benchmark Tests Miss the Mark

Most performance testing tools focus on isolated component metrics. They’ll measure CPU speed in isolation, storage IOPS separately, and network throughput independently. This creates a misleading picture of real-world performance, which is always about how these components work together under load.

Imagine evaluating a sports team by only looking at individual player statistics. You might see a star quarterback with perfect passing accuracy, but miss that the offensive line can’t protect him. Similarly, a server with top-tier components can still fail if the system architecture doesn’t support coordinated performance.

The hidden factor often comes down to memory bandwidth and latency. High-performance applications—especially those handling complex queries or large datasets—rely on consistent memory access patterns. A server might have abundant RAM, but if the memory controller can’t deliver data quickly enough, performance will suffer unpredictably.
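To make the memory access point concrete, here is a minimal sketch that reads the same buffer twice: once in order, once in a shuffled order. The function names and buffer size are illustrative assumptions, not a standard benchmark. Python's interpreter overhead blunts the effect, so treat the numbers as a rough indication; a compiled language would show the gap far more clearly.

```python
import random
import time
from array import array


def time_access(data, indices):
    """Sum data[i] for every i in indices; return (seconds, total)."""
    start = time.perf_counter()
    total = 0
    for i in indices:
        total += data[i]
    return time.perf_counter() - start, total


def compare_access_patterns(n=1_000_000, seed=0):
    """Time in-order versus shuffled reads over the same n-element buffer."""
    data = array("q", range(n))            # contiguous 64-bit integers
    in_order = list(range(n))
    shuffled = in_order[:]
    random.Random(seed).shuffle(shuffled)  # same indices, scattered order

    seq_time, seq_total = time_access(data, in_order)
    rnd_time, rnd_total = time_access(data, shuffled)
    assert seq_total == rnd_total          # both passes touched the same elements
    return seq_time, rnd_time


if __name__ == "__main__":
    seq, rnd = compare_access_patterns()
    print(f"sequential: {seq:.3f}s  shuffled: {rnd:.3f}s")
```

Both passes do identical arithmetic on identical data. Any difference in timing comes from the order in which memory is touched, which is the kind of effect a RAM capacity figure cannot tell you about.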

How to Evaluate Server Performance Beyond the Specs Sheet

When assessing server hardware, look beyond the basic specifications. Ask about memory channel configurations, cache hierarchies, and how the system handles bursty workloads. These factors often determine whether a server can maintain consistent performance during traffic spikes.
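Memory channel count matters because theoretical peak bandwidth scales with it. The sketch below uses the usual back-of-the-envelope arithmetic: channels times transfer rate times bytes per transfer. The two configurations are hypothetical examples, not the specs of any particular server, and the 64-bit data width per channel is an assumption that holds for common DDR4 and DDR5 DIMM channels.

```python
def peak_memory_bandwidth_gbs(channels, mega_transfers_per_sec, bus_width_bits=64):
    """Theoretical peak memory bandwidth in GB/s (decimal gigabytes).

    channels               -- populated memory channels per socket
    mega_transfers_per_sec -- module rating, e.g. 3200 for DDR4-3200
    bus_width_bits         -- data width per channel (assumed 64)
    """
    bytes_per_transfer = bus_width_bits // 8
    megabytes_per_sec = channels * mega_transfers_per_sec * bytes_per_transfer
    return megabytes_per_sec / 1000


if __name__ == "__main__":
    # Hypothetical configurations, for illustration only.
    print(peak_memory_bandwidth_gbs(8, 3200))   # 8 channels of DDR4-3200
    print(peak_memory_bandwidth_gbs(12, 4800))  # 12 channels of DDR5-4800
```

Real sustained bandwidth is always lower than this ceiling, and a server with half its channels unpopulated gets half the ceiling regardless of how much RAM is installed. That is why the channel configuration is worth asking about.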

Consider this practical test: run a sequence of operations that stress different components (CPU, memory, storage) in succession, and repeat the sequence several times. A well-designed server will show relatively consistent timings for each operation from one round to the next, while a poorly designed one will show dramatic fluctuations. The size of those fluctuations reveals how well the system manages resource contention.
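A rough version of that test might look like the following. The three stage functions are stand-in workloads chosen for illustration (they are assumptions, not a recognized benchmark suite), and the coefficient of variation is just one reasonable way to express how much each stage's timing fluctuates between rounds.

```python
import hashlib
import os
import statistics
import tempfile
import time


def cpu_stage():
    """Hash a 4 MB buffer: mostly CPU-bound."""
    hashlib.sha256(b"x" * 4_000_000).digest()


def memory_stage():
    """Allocate and copy a 32 MB buffer: mostly memory-bound."""
    buf = bytearray(32_000_000)
    bytes(buf)


def disk_stage():
    """Write 4 MB to a temporary file and force it to storage."""
    with tempfile.TemporaryFile() as f:
        f.write(os.urandom(4_000_000))
        f.flush()
        os.fsync(f.fileno())


def coefficient_of_variation(samples):
    """Population standard deviation divided by the mean (0.0 if mean is 0)."""
    mean = statistics.fmean(samples)
    return statistics.pstdev(samples) / mean if mean else 0.0


def run_rounds(stages, rounds=5):
    """Run every stage back to back for several rounds; return CV per stage."""
    timings = {name: [] for name in stages}
    for _ in range(rounds):
        for name, stage in stages.items():
            start = time.perf_counter()
            stage()
            timings[name].append(time.perf_counter() - start)
    return {name: coefficient_of_variation(t) for name, t in timings.items()}


if __name__ == "__main__":
    stages = {"cpu": cpu_stage, "memory": memory_stage, "disk": disk_stage}
    for name, cv in run_rounds(stages).items():
        print(f"{name}: variation {cv:.1%}")
```

Lower variation means steadier behavior. Run it on each candidate machine with the same settings; the absolute times matter less than how much they wobble when the stages follow one another.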

The Cloudflare approach demonstrates this principle. Their Gen13 configuration wasn’t about adding more of everything; it was about creating a system where each component could operate at peak efficiency without creating bottlenecks elsewhere. This holistic approach is what separates truly high-performance infrastructure from merely well-spec’d equipment.

The Hidden Cost of Ignoring Hardware Architecture

Choosing server hardware based solely on component specifications can lead to unexpected performance issues. You might end up with servers that look great on paper but fail to deliver in production. These performance gaps often appear during peak traffic periods or when handling complex operations—exactly when reliability matters most.

The financial implications extend beyond hardware costs. Downtime, slow response times, and an inability to scale efficiently can all trace back to these architectural choices. What begins as a hardware decision becomes a business problem when performance doesn’t meet expectations.

Cloudflare’s detailed configuration post reveals how they approached this problem systematically. They didn’t just select components; they designed a system where components could work together efficiently. This approach requires deeper expertise than simply matching specifications to requirements.

What This Means for Your Next Hosting Decision

When evaluating hosting solutions or server configurations, don’t rely solely on component specifications. Ask about the system architecture, how components interact, and how the system handles peak loads. These questions reveal whether providers have considered the subtle factors that determine real-world performance.

Consider testing your applications on potential hosting environments under realistic conditions. The differences in performance may surprise you, often revealing that systems with seemingly inferior specifications outperform those with better specs due to superior architecture.
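When you run such a trial, averages tend to hide the problem; tail latency is usually where architectural weaknesses show up. Here is a small sketch for collecting latencies and summarizing them with nearest-rank percentiles. The `request_fn` argument is a placeholder for whatever call exercises your application, and the stand-in workload in the usage section is purely hypothetical.

```python
import math
import time


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list (pct between 0 and 100)."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def measure_latencies(request_fn, count=200):
    """Call request_fn repeatedly and return each call's duration in seconds."""
    latencies = []
    for _ in range(count):
        start = time.perf_counter()
        request_fn()
        latencies.append(time.perf_counter() - start)
    return latencies


def summarize(latencies):
    """Report the median and tail of a latency sample."""
    return {
        "p50": percentile(latencies, 50),
        "p95": percentile(latencies, 95),
        "p99": percentile(latencies, 99),
        "max": max(latencies),
    }


if __name__ == "__main__":
    # Stand-in workload; replace with a real request to the environment under test.
    summary = summarize(measure_latencies(lambda: sum(range(50_000))))
    for name, seconds in summary.items():
        print(f"{name}: {seconds * 1000:.2f} ms")
```

Compare the gap between p50 and p99 across environments. Two hosts with similar medians can differ widely at the tail, and that difference is what users feel during traffic spikes.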

The most successful infrastructure decisions balance component specifications with system architecture. This holistic approach—evident in Cloudflare’s Gen13 configuration—recognizes that true performance comes from how components work together, not just how powerful each component is individually.

The Performance Secret That Changes Everything

The critical insight about server hardware isn’t about finding the absolute fastest components; it’s about understanding how those components work together. A system designed this way can deliver consistent, reliable performance even when its individual components aren’t the fastest available.

When you look at server performance through this lens, the conversation shifts from component specifications to system architecture. This is why Cloudflare’s detailed configuration discussion matters—it reveals that performance engineering is about creating harmony between components, not just selecting the best individual parts.

Ultimately, the server hardware detail that quietly changes performance isn’t any single component; it’s the architectural philosophy that guides how those components interact. This is the factor that separates truly high-performance infrastructure from merely well-equipped systems, and it’s why thoughtful design so often beats raw specifications.