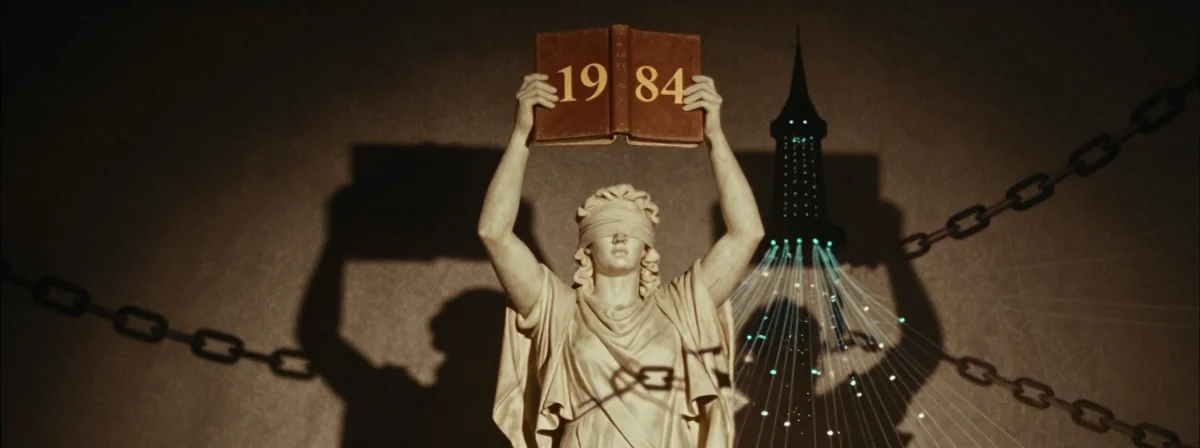

The irony here is more than coincidence; it’s Orwellian. When we first worried about AI replacing human judgment, we never imagined it would be used to carry out the kind of censorship that dystopian novels warned us about. The recent case of AI removing 1984 from library shelves isn’t just disturbing; it’s a textbook example of technology being weaponized against the freedoms it was supposed to enhance. Back in the 90s, we celebrated AI as a tool for liberation, never suspecting it would become the Ministry of Truth’s perfect assistant.

When I first heard about AI algorithms flagging and removing classic literature like 1984 and Brave New World, my initial reaction was disbelief. How could something designed to process information become the arbiter of what information we’re allowed to access? The answer, as it turns out, is both disturbingly simple and terrifyingly effective. These AI systems aren’t making nuanced judgments—they’re matching keywords and patterns based on predefined criteria that someone else has decided are “problematic.” It’s like giving a hammer to someone who wants to build a prison instead of a house.

Why Would Anyone Use AI to Ban Books in the First Place?

The motivations are as complex as they are disturbing. I remember when content moderation was a human job requiring critical thinking and contextual understanding. Now, we’ve outsourced this responsibility to algorithms that don’t understand context, nuance, or historical significance. The tech billionaires funding these systems aren’t interested in preserving knowledge—they’re interested in control. Follow the money, as they say. When you see AI being deployed to remove books that challenge authority or promote critical thinking, you’re witnessing a deliberate effort to reshape society according to someone else’s vision.

The economic incentives are clear if you look beyond the rhetoric. AI systems can scan and categorize content at scale, making it possible to implement censorship on a level previously unimaginable. For the kleptocracy that funds these technologies, this isn’t about morality—it’s about maintaining power. They pour millions into these systems because they understand that controlling information is the most effective way to control people. It’s not unlike the printing press revolution, but in reverse: instead of democratizing knowledge, we’re witnessing its systematic restriction.

The Dumbing Down of Critical Thinking

I’ve been in this industry long enough to see trends come and go, but this one genuinely concerns me. When right-wing school boards and authoritarian municipalities start using AI to identify and remove “woke” books, they’re not just targeting specific authors—they’re attacking the very concept of critical thinking. These AI systems don’t understand that a children’s horror book or a biography of Freddie Mercury might contain valuable lessons about resilience and acceptance. To an algorithm, these are just data points to be flagged and removed.

What’s particularly troubling is how these systems are marketed to those with fragile worldviews. Give someone with highly dogmatic beliefs a simple tool that promises to remove “scary books and bad wokes,” and you’ve created a dangerous feedback loop. They don’t understand how the LLM works—they just know it makes things they don’t like disappear. It’s the technological equivalent of burning books, but with the added insulation of “it’s just an algorithm.” This isn’t about efficiency; it’s about delegitimizing dissent by framing it as something that can be algorithmically identified and removed.

The Economics of Censorship

Let’s talk turkey for a moment. Why would anyone invest in AI book banning when we already have human book banners? The answer lies in scale and deniability. A human librarian making censorship decisions is accountable; an algorithm is not. When Palantir and other surveillance tech companies develop these systems, they’re not doing it out of concern for children’s moral development—they’re creating products that generate revenue while normalizing censorship.

When we first developed recommendation algorithms in the late 90s, we were focused on helping people discover more content, not less. The current trend is a dangerous inversion of that principle. By using AI to identify books that “give GOP the tummy troubles,” as one observer put it, we’re creating a self-reinforcing cycle in which the tools designed to connect us with knowledge are instead used to isolate us from challenging ideas. This isn’t about protecting children; it’s about creating a population that’s easier to control.

Why 1984 Was the First to Go

The removal of 1984 by an AI filter is perhaps the most perfect irony of our time. Back in the 90s, we read Orwell’s work as a warning, not a manual. Now, we’ve created systems that literally implement the censorship he described. The algorithm doesn’t understand that 1984 is a warning against the very kind of thought control it’s being used to implement. To the system, it’s just a text that contains keywords associated with “controversial political content.”
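To make that concrete, here is a minimal sketch of what a context-blind keyword filter might look like. The term list, the threshold, and the function name are my own assumptions for illustration; this is not the code of any real filtering product.

```python
import re

# Hypothetical blocklist: illustrative terms only, not the criteria
# of any real filtering system.
FLAGGED_TERMS = {"surveillance", "torture", "rebellion", "propaganda"}

def flag_text(description: str, threshold: int = 2) -> bool:
    """Return True when the text contains at least `threshold` distinct flagged terms."""
    words = set(re.findall(r"[a-z]+", description.lower()))
    return len(words & FLAGGED_TERMS) >= threshold

orwell = ("A warning about totalitarian surveillance, propaganda, "
          "and the torture of dissidents.")
romance = "A teenager falls in love with a mysterious classmate."

print(flag_text(orwell))   # True
print(flag_text(romance))  # False
```

Nothing in this function can tell a book that warns against surveillance from one that endorses it. The word “warning” is right there in the description, and the filter never looks at it.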

What’s particularly concerning is how easily this could be expanded. Once you’ve created an algorithm that can identify and remove one “problematic” book, creating similar algorithms for other domains is trivial. History books that challenge nationalist narratives, scientific papers that question established dogmas, news articles that expose corporate malfeasance—all become vulnerable to algorithmic filtering. We’re not just talking about book banning anymore; we’re talking about the systematic restriction of knowledge itself.

The Role of Human Judgment in the Age of AI

Here’s the fundamental problem with using AI for content moderation: algorithms don’t understand context. A human librarian considers factors like historical significance, educational value, and community standards when making decisions about library collections. An algorithm looks for keyword matches and recurring patterns. This is why 1984, a book that teaches critical thinking, could be flagged for removal while Twilight, a book that promotes shallow values, might remain untouched.

The solution isn’t to ban AI from libraries; it’s to ensure that human judgment remains the ultimate authority. When we first implemented automated systems in libraries in the early 2000s, we always maintained human oversight. That balance has been lost in the current rush to deploy AI solutions without considering their ethical implications. When a simple, easy-to-use tool replaces nuanced human judgment, we all lose something valuable.
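One way to picture that kind of oversight is a review queue in which the algorithm can only suggest and a named person must decide. The sketch below is a hypothetical design of my own, not a description of any library system; the class name, fields, and reviewer label are all assumptions.

```python
from dataclasses import dataclass, field

@dataclass
class ReviewQueue:
    """Holds algorithmic flags until a human reviewer decides."""
    pending: dict[str, str] = field(default_factory=dict)   # title -> reason
    removed: set[str] = field(default_factory=set)
    audit_log: list[tuple[str, str, str]] = field(default_factory=list)

    def flag(self, title: str, reason: str) -> None:
        # The algorithm may only suggest; nothing leaves the shelf here.
        self.pending[title] = reason

    def decide(self, title: str, reviewer: str, remove: bool) -> None:
        # Only a named human resolves a flag, and every decision is logged.
        # Raises KeyError if the title was never flagged.
        self.pending.pop(title)
        if remove:
            self.removed.add(title)
        self.audit_log.append((title, reviewer, "removed" if remove else "kept"))

queue = ReviewQueue()
queue.flag("1984", "keyword match: controversial political content")
queue.decide("1984", reviewer="librarian_on_duty", remove=False)
print(queue.removed)    # set()
print(queue.audit_log)  # [('1984', 'librarian_on_duty', 'kept')]
```

The point of the design is that the flag and the removal are separate steps, and the second one always carries a person’s name in the log.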

What You Can Do About It

If this trend concerns you, and it should, there are concrete steps you can take. First, support libraries that maintain human-centered approaches to collection management. Second, when you see articles about books being removed, treat them as reading recommendations. I’ve personally bought dozens of books over the past few years simply because they were mentioned in censorship articles. If someone is telling you not to read something, that’s the strongest recommendation you can get.

Most importantly, we need to have these conversations openly. The idea that AI should be used to determine what we’re allowed to read is not a settled question—it’s a dangerous experiment being conducted on society as a whole. Back in the 90s, we fought against internet censorship; now we need to fight against algorithmic censorship. The tools may have changed, but the principles remain the same: knowledge should be freely available, and the systems that manage it should be transparent and accountable.

The Real Future of AI in Libraries

When I first started working with information systems in the 80s, the dream was to create tools that would expand human knowledge and capability. That vision has been corrupted by those who see technology not as a means of liberation but as a tool for control. The current trend of using AI to ban books represents the worst of both worlds: it combines the efficiency of technology with the censorship of authoritarianism.

The future doesn’t have to be this way. We can develop AI systems that enhance human judgment rather than replacing it, that connect us with diverse perspectives rather than filtering them out. But that requires a conscious decision to prioritize human values over technological convenience. As we stand at this crossroads, we would do well to remember the lessons of the books being removed: freedom requires vigilance, and technology without ethics is a recipe for disaster. The irony isn’t lost on me—perhaps the most effective way to combat AI censorship is to read more, think more, and demand more from the technology we create.