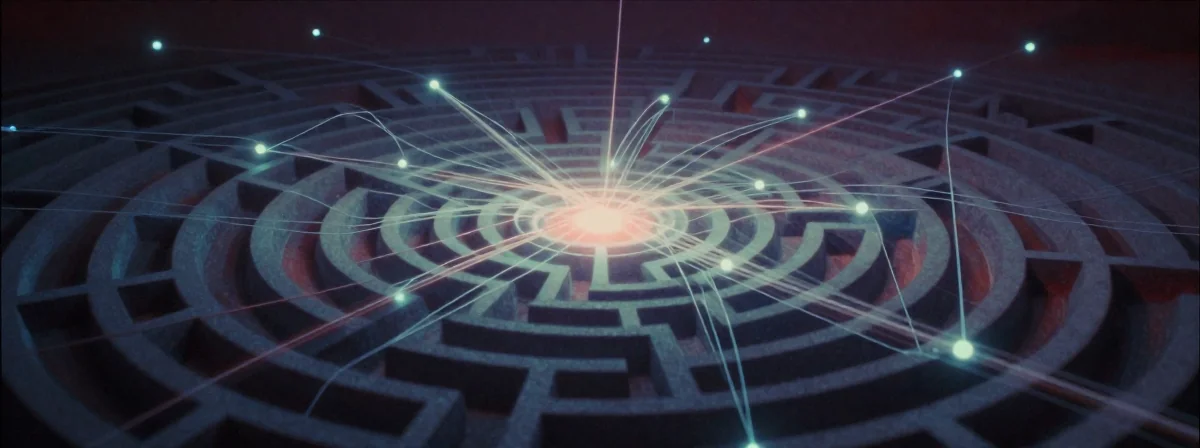

Every day you’re bombarded with content designed to capture your attention. Those personalized recommendations, the endless scroll, the notifications that demand your immediate focus—these aren’t accidental features. They’re meticulously engineered systems built to maximize engagement at any cost. The truth is, your online behavior is being shaped by invisible forces that few understand.

Digital platforms have transformed from simple content providers into sophisticated behavior-modification tools. What started as innocent ways to connect has evolved into an ecosystem where user retention metrics outweigh user wellbeing. The mechanisms driving this transformation are complex, but their effects are hard to miss: persistent distraction, compulsive checking, and a growing sense that someone else is steering your attention.

Consider this: by some estimates, the average person now spends over six hours a day consuming digital content. That is close to the amount of time many people spend sleeping. Something fundamental has shifted in how we interact with technology, and understanding these shifts begins with recognizing the invisible architects of our digital experiences.

How Digital Platforms Hijack Your Attention

The core mechanism behind this behavioral control is surprisingly simple yet devastatingly effective. Platforms employ algorithms specifically designed to identify and exploit psychological vulnerabilities. These aren’t random suggestions—they’re calculated manipulations targeting your brain’s reward pathways. The more time you spend, the more data they collect, creating a feedback loop that becomes increasingly personalized and addictive.

Take recommendation systems, for instance. They don’t just suggest content you might like; they engineer experiences that keep you engaged longer. This explains why your YouTube home page can be dominated by videos from channels you never subscribed to, or why social feeds mix subscribed content with algorithmic suggestions. The goal isn’t satisfaction. It’s maximized screen time.

The most insidious aspect is how these systems adapt to your specific vulnerabilities. If you respond strongly to emotional content, you’ll see more of it. If you’re prone to comparison, you’ll encounter more curated lifestyles. The system learns what keeps you hooked and doubles down on those patterns.
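To make the feedback loop concrete, here is a minimal sketch of how a feed could drift toward whatever a user engages with most. It is a toy model, not any real platform's algorithm: the function name `simulate_feed`, the category names, the engagement rates, and the multiplicative update rule are all assumptions chosen for illustration.

```python
def simulate_feed(engagement_rates, rounds=20, learning_rate=0.5):
    """Return each category's share of the feed at the start of every round.

    engagement_rates: hypothetical probability that the user engages with
    one item from each category (illustrative numbers, not real data).
    """
    # Start with an even mix: every category gets the same weight.
    weights = {category: 1.0 for category in engagement_rates}
    history = []
    for _ in range(rounds):
        total = sum(weights.values())
        # A category's share of the feed is its weight relative to the total.
        shares = {category: weight / total for category, weight in weights.items()}
        history.append(shares)
        # Reinforce each category in proportion to the engagement it earns,
        # so the content that "works" gets shown more in the next round.
        for category, rate in engagement_rates.items():
            weights[category] *= 1.0 + learning_rate * rate
    return history


if __name__ == "__main__":
    # Assumed user who reacts most strongly to emotional content.
    rates = {"emotional": 0.6, "news": 0.3, "hobby": 0.2}
    history = simulate_feed(rates)
    for round_number in (0, 9, 19):
        mix = ", ".join(f"{c}: {s:.0%}" for c, s in history[round_number].items())
        print(f"round {round_number + 1}: {mix}")
```

Even with a modest difference in engagement rates, the category the user responds to most ends up crowding out the others, because every round of reinforcement compounds on the last one.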

The Business Model Nobody Talks About

What’s rarely discussed is that most platforms are fundamentally advertising businesses disguised as content providers. Their primary product isn’t content or even the platform itself. It’s your attention. Every click, scroll, and interaction becomes a data point used to sell targeted advertising to marketers, creating a profitable cycle of attention harvesting.

This business model creates an inherent conflict of interest. Features that maximize engagement often undermine user wellbeing. The “dopamine hits” from notifications, the endless scroll design, the infinite content stream—all serve advertising metrics before serving user needs. It’s a system where your satisfaction is secondary to engagement metrics.

Even platforms that allow customization options rarely present them clearly. The ability to turn off algorithmic recommendations exists, but it’s buried in settings menus most users never find. This isn’t an oversight—it’s intentional design. If users understood how they were being manipulated, they might resist.

The Legal Battle Over Digital Addiction

Recent court decisions reveal a potential turning point in how society addresses these manipulative practices. When courts begin treating addictive design as harmful rather than just effective, the industry faces unprecedented liability. The precedent being set could expose platforms to millions of claims from users harmed by these practices.

The legal arguments center on a simple but powerful concept: if a company designs a product specifically to create addiction, and that addiction causes demonstrable harm, the company should be held accountable. This mirrors historical battles against tobacco companies that initially denied their products were addictive before facing regulation and liability.

What makes this legal landscape particularly significant is its potential scope. Unlike specific content regulations, these cases target the fundamental design practices that create addictive behaviors across platforms. This could force fundamental changes in how digital products are developed, moving beyond surface-level adjustments to address core addictive mechanisms.

Practical Steps to Regain Control

Understanding these mechanisms is only useful if it leads to meaningful change in your digital habits. The good news is that awareness creates opportunity. By recognizing how platforms manipulate behavior, you can implement countermeasures that restore agency to your digital experiences.

Start by auditing your digital environment. Identify which platforms consistently leave you feeling drained or distracted. Then, systematically adjust your relationship with these tools. This might mean turning off notifications, setting time limits, or using browser extensions that block addictive patterns.
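One way to run such an audit is to log your sessions for a day and total them per app. The sketch below assumes you have copied the numbers by hand from your phone's screen-time report into a list of `(app, minutes)` pairs; the function name `audit_usage`, the data format, and the 60-minute limit are hypothetical choices, not features of any particular tool.

```python
from collections import defaultdict


def audit_usage(sessions, daily_limit_minutes):
    """Total the minutes per app and return the apps that exceed the limit.

    sessions: iterable of (app_name, minutes) pairs (assumed, hand-entered format).
    Returns a dict of app -> total minutes, worst offender first.
    """
    totals = defaultdict(int)
    for app, minutes in sessions:
        totals[app] += minutes
    # Keep only the apps whose daily total is above the chosen limit.
    over_limit = {app: total for app, total in totals.items()
                  if total > daily_limit_minutes}
    # Sort by total minutes, largest first, so the biggest drain is listed first.
    return dict(sorted(over_limit.items(), key=lambda item: item[1], reverse=True))


if __name__ == "__main__":
    # Made-up example day.
    day = [("video", 50), ("chat", 20), ("video", 45), ("feed", 70), ("chat", 15)]
    for app, total in audit_usage(day, daily_limit_minutes=60).items():
        print(f"{app}: {total} minutes")
```

The apps this flags are the natural candidates for muted notifications or a time limit.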

For those who want deeper change, consider implementing digital detox periods. Completely removing apps from your phone for 24 to 48 hours can recalibrate your relationship with technology. Many people report discovering they can function effectively with less digital input than they previously believed.

The Future of Digital Interaction

The ongoing legal battles and growing awareness about digital manipulation suggest we’re at a crossroads. One path continues the current trajectory of increasingly addictive platforms that prioritize engagement over wellbeing. The alternative involves developing ethical design standards that put user wellbeing on par with business metrics.

What’s clear is that change won’t come from platforms voluntarily reforming—they have too much to lose from changing their most profitable features. Instead, meaningful change will require regulatory pressure, legal challenges, and collective consumer demand for healthier digital experiences.

The most powerful tool in this transformation is awareness itself. When enough people understand how these systems work and demand better, the economic incentives shift. Platforms that prioritize user wellbeing over engagement metrics will gain competitive advantage, creating a market incentive for healthier design.

Until then, the responsibility falls to individual users to understand these mechanisms and implement personal boundaries. Even small changes in awareness and behavior can significantly reduce the manipulative power of digital platforms. The first step is always recognizing that you’re not just using technology. You’re participating in a carefully designed system that’s often working against your best interests.